You’ve likely encountered the siren song of “optimization.” It’s a promise of peak performance, of shaving off every sliver of inefficiency until your processes, your code, your marketing campaigns, or even your digital presence hums with an almost supernatural grace. But like a ship too heavily laden with treasures, if you push optimization too far, you risk capsizing your entire endeavor. This is the domain of over-optimization, a dangerous territory where the pursuit of marginal gains can lead to significant losses.

The Illusion of Perpetual Improvement

You might see optimization as climbing a mountain. Each step brings you closer to the summit, to a clearer view, to a more efficient existence. And for a while, this metaphor holds true. You identify bottlenecks, streamline workflows, and eliminate redundancies. You celebrate each increment of improvement, feeling the satisfying click of a problem solved, a resource freed.

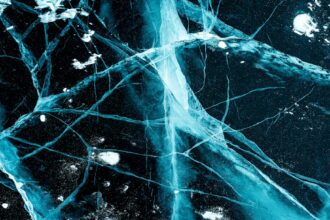

However, the mountain of optimization is not a uniform incline. There are plateaus, false summits, and eventually points where the air becomes too thin to breathe. Pushing beyond these natural limits, continuing to chisel away at minuscule inefficiencies, is where you enter the realm of over-optimization. It’s akin to trying to wring water from a stone: you expend immense effort for negligible returns, and in the process you might crack the stone itself.

This can manifest in various ways. In software development, it means spending days perfecting a function that will only shave milliseconds off its execution time, time that could have been spent developing a new feature or fixing a more critical bug. In marketing, it’s A/B testing every single element of an ad campaign down to the millimeter of a button’s placement, in the hope of a 0.01% conversion rate increase, while neglecting the fundamental message.
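To put a number on the A/B testing example, here is a rough sample-size sketch. It assumes a hypothetical 2.00% baseline conversion rate and reads "0.01%" as an absolute lift of 0.01 percentage points (2.00% to 2.01%). It uses the standard normal-approximation formula for a two-sided, two-proportion z-test at 5% significance and 80% power; the function name `sample_size_per_arm` is illustrative, not from any library.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(p_baseline: float, p_variant: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed per arm to detect p_baseline -> p_variant.

    Normal approximation for a two-sided, two-proportion z-test (unpooled variance).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    variance = p_baseline * (1 - p_baseline) + p_variant * (1 - p_variant)
    delta = p_variant - p_baseline
    return math.ceil((z_alpha + z_power) ** 2 * variance / delta ** 2)


# Hypothetical baseline of 2% conversion.
tiny_lift = sample_size_per_arm(0.0200, 0.0201)  # +0.01 percentage points
big_lift = sample_size_per_arm(0.02, 0.03)       # +1 percentage point
print(f"tiny lift: {tiny_lift:,} visitors per arm")
print(f"big lift:  {big_lift:,} visitors per arm")
```

Under these assumptions the tiny lift needs on the order of 30 million visitors per arm, versus a few thousand for a one-point lift. Because the effect size is squared in the denominator, each further shrinking of the target gain multiplies the traffic you need.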

You must understand that diminishing returns are an inherent part of any optimization process. At some point, the cost of further optimization in terms of time, resources, and complexity outweighs the benefit. Recognizing this point is crucial to avoiding the pitfalls of over-optimization.
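A back-of-the-envelope break-even calculation makes the trade-off concrete. The sketch below uses made-up figures (2 ms saved per call, 10,000 calls a day, three 8-hour days of work) and the crude assumption that one second of saved runtime is worth one second of engineering time; `break_even_days` is a hypothetical helper, not a standard function.

```python
def break_even_days(ms_saved_per_call: float, calls_per_day: int,
                    engineering_hours: float) -> float:
    """Days of production use before saved runtime repays the engineering time.

    Crude model: a second of machine time is treated as worth a second of
    engineer time, and user-facing latency effects are ignored.
    """
    seconds_saved_per_day = ms_saved_per_call / 1000 * calls_per_day
    engineering_seconds = engineering_hours * 3600
    return engineering_seconds / seconds_saved_per_day


# Hypothetical: 2 ms saved per call, 10,000 calls a day, three 8-hour days of work.
days = break_even_days(2, 10_000, 24)
print(f"break-even after {days:,.0f} days (~{days / 365:.1f} years)")
```

With these numbers the work pays for itself only after 4,320 days, roughly twelve years. A hot path called millions of times a day, or latency that users actually notice, would change the answer, so the inputs matter more than the formula.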

Identifying the Point of Diminishing Returns

- The Cost-Benefit Analysis Black Hole: You find yourself locked in a cycle where each planned optimization requires more analysis and more effort than the last. The projected gains become increasingly theoretical, and the time you spend contemplating improvements far exceeds the time you spend implementing them. This is a stark warning sign.

- The “Good Enough” Threshold: There is a point where your system or process performs adequately, meets its objectives, and delivers a positive return on investment. Pushing past this “good enough” threshold in pursuit of perfection is a slippery slope.

- The Anecdotal Evidence Trap: You start relying on anecdotal evidence or gut feelings about potential improvements rather than robust data. The desire for more is fueled by a belief that “just a little bit more” will make a significant difference, even when the data suggests otherwise.
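For code, one way to stay on the data side of that trap is to measure before and after, and to treat small differences as noise. The sketch below uses Python's standard `timeit` module; the helper name `is_real_speedup` and the 5% threshold are arbitrary assumptions for illustration.

```python
import timeit
from typing import Callable


def is_real_speedup(baseline: Callable[[], object], candidate: Callable[[], object],
                    repeat: int = 7, number: int = 200, min_gain: float = 0.05) -> bool:
    """True only if candidate beats baseline by more than min_gain (a fraction)."""
    # Take the best of several runs; slower runs mostly reflect interference
    # from other processes rather than the code being measured.
    base = min(timeit.repeat(baseline, repeat=repeat, number=number))
    cand = min(timeit.repeat(candidate, repeat=repeat, number=number))
    return (base - cand) / base > min_gain


data = list(range(1_000))


def loop_sum() -> int:
    total = 0
    for x in data:
        total += x
    return total


def builtin_sum() -> int:
    return sum(data)


print(is_real_speedup(loop_sum, builtin_sum))
print(is_real_speedup(builtin_sum, builtin_sum))
```

Timings vary by machine, so treat the printed results as an illustration rather than a benchmark. The point is the shape of the decision: a change counts as an improvement only when it clears a threshold you set in advance, not when it merely feels faster.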

To effectively navigate the complexities of digital marketing, it’s crucial to understand how to avoid over-optimization traps that can hinder your website’s performance. A related article that delves deeper into this topic is available at Productive Patty, where you can find valuable insights and strategies to maintain a balanced approach to optimization. By following the guidance provided, you can enhance your site’s visibility without falling into common pitfalls that may arise from excessive tweaking.

The Rise of Complexity: A Tangled Web

One of the most insidious consequences of over-optimization is the unintended rise of complexity. When you relentlessly refine and tweak, you often introduce layers of intricate logic and specialized solutions. What begins as a simple, elegant system can become a Gordian knot, impossibly tangled and difficult to manage.

Imagine you are trying to optimize a simple recipe. You might start by perfecting the cooking time of each ingredient to the millisecond. Then you might analyze the molecular structure of each component to ensure optimal interaction. You could even delve into the precise temperature fluctuations within your kitchen’s ventilation system to ensure the air composition is ideal for flavor development. While each of these steps might, in isolation, yield a tiny improvement, the sum of these efforts creates a highly complex and fragile process.

This complexity makes your system brittle. A small change in one area can have unforeseen and cascading negative effects in others. Debugging becomes a Herculean task, as you must untangle the web of intricate dependencies. Furthermore, onboarding new team members or handing over the project to someone else becomes incredibly challenging. They will need to learn not just the core functionality but also the labyrinthine logic that underpins your relentlessly optimized creation. This hidden cost of complexity is often underestimated.

The Cascading Dependencies

- Interconnectedness Becomes a Minefield: Every seemingly minor tweak creates new interdependencies. You’re not just optimizing a part; you’re creating a new junction in a growing network, and any strain on that junction can ripple through the entire system.

- Fragility Through Specialization: In an effort to achieve peak performance in a specific area, you might create highly specialized solutions. These solutions are often less robust and more prone to failure when faced with unexpected inputs or changing environmental conditions.

- The “Feature Creep” of Optimization: Just as feature creep can bloat software, “optimization creep” can bloat your processes. Each new micro-optimization becomes another feature, adding to the overall complexity without necessarily adding proportionate value.
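A tiny illustration of fragility through specialization: a lookup table tuned for one input range works inside that range and breaks, or quietly misbehaves, outside it, while a general version keeps working. The function names are hypothetical.

```python
# Specialized: precomputed bit counts for every possible byte value (0-255).
_BYTE_POPCOUNT = [bin(i).count("1") for i in range(256)]


def popcount_byte(n: int) -> int:
    """Table lookup: skips building a string, but only meaningful for 0 <= n <= 255."""
    return _BYTE_POPCOUNT[n]


def popcount(n: int) -> int:
    """General version: builds a binary string each call, correct for any n >= 0."""
    return bin(n).count("1")


print(popcount_byte(200), popcount(200))  # 3 3
print(popcount(256))                      # 1

try:
    popcount_byte(256)                    # outside the range the table was built for
except IndexError:
    print("IndexError: the table was never built for this input")

print(popcount_byte(-1))                  # 8: Python indexing wraps, so this is meaningless
```

The negative-input case is the more dangerous failure: it raises no error at all, because a negative list index silently reads from the end of the table.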

The Erosion of Maintainability and Adaptability

A system that has been over-optimized often becomes a monument to its past self rather than a living entity capable of evolving. Maintainability and adaptability are often the first casualties. When you’ve painstakingly crafted every element for maximum efficiency under specific conditions, introducing changes becomes a major undertaking.

Think of a finely tuned race car. For a specific track, it might be the fastest machine imaginable. However, if you suddenly need to navigate bumpy terrain or changing weather, that finely tuned machine will struggle. Its specialized components, optimized for speed on a perfect surface, will be its undoing.

Similarly, your over-optimized software might perform brilliantly under its intended load, but introduce a new user demographic with slightly different usage patterns, and the entire structure might groan under the strain. Updates become risky propositions. Instead of a simple patch, you might need to re-evaluate and potentially re-optimize large sections of the system, a process that is both time-consuming and prone to introducing new errors. The agility that optimization is supposed to foster can be lost, replaced by a rigid, high-performance shell.

The Rigidity of Precision

- The “If It Ain’t Broke, Don’t Fix It” Paradox: Ironically, the very success of your optimization can create a reluctance to touch anything for fear of breaking the delicate balance. This leads to stagnation, where valuable improvements are avoided due to the perceived risk.

- The Cost of Backward Compatibility: As you introduce new optimized versions, retrofitting older components or ensuring compatibility with legacy systems becomes a significant engineering challenge. This can create technical debt that hinders future development.

- The Inability to Pivot: In a dynamic environment, the ability to adapt and pivot is crucial. An over-optimized system is often so tightly coupled and specialized that significant changes in direction become prohibitively difficult and expensive, effectively locking you into a specific path.

The Cost of Unforeseen Consequences: The Butterfly Effect

The pursuit of perfect efficiency can sometimes trigger unforeseen consequences, akin to the butterfly effect where a small change in one place can lead to distant and dramatic outcomes. You focus so intently on a specific metric, a particular performance indicator, that you overlook the broader implications.

Consider a scenario where you relentlessly optimize a website’s loading speed by aggressive caching and server-side rendering. While your page load times might plummet, you might inadvertently create a less dynamic user experience. Perhaps real-time updates are delayed, or certain interactive elements function less smoothly because the system is prioritizing raw speed over immediate responsiveness. The user, while experiencing a faster initial load, might find the overall interaction clunky, leading to frustration and a lower engagement rate.
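The caching side of this trade-off can be sketched with a minimal time-to-live (TTL) cache. This is a hypothetical illustration, not any particular framework's cache: the `TTLCache` class, the injectable clock, and the live-score example are assumptions. A longer TTL means more fast cache hits, but also a longer window in which users see outdated data.

```python
import time
from typing import Callable, Dict, Tuple


class TTLCache:
    """Minimal time-to-live cache; `clock` is injectable so the demo is deterministic."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries: Dict[str, Tuple[float, object]] = {}

    def get(self, key: str, load: Callable[[], object]) -> object:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1]       # cache hit: fast, but possibly stale
        value = load()            # miss or expired: go back to the source of truth
        self._entries[key] = (now, value)
        return value


# Hypothetical demo: a live score changes while the cache still holds the old one.
fake_now = [0.0]
source = {"score": "1-0"}
cache = TTLCache(ttl_seconds=60, clock=lambda: fake_now[0])

print(cache.get("score", lambda: source["score"]))  # 1-0 (loaded)
source["score"] = "1-1"                             # the real value changes
fake_now[0] = 30.0
print(cache.get("score", lambda: source["score"]))  # 1-0 (stale hit)
fake_now[0] = 61.0
print(cache.get("score", lambda: source["score"]))  # 1-1 (expired, reloaded)
```

Tuning `ttl_seconds` upward improves the hit rate, which is exactly the metric a speed-focused optimization rewards, while the stale window it creates shows up only in the user experience.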

This highlights the danger of optimizing for a single variable in isolation. Systems are complex ecosystems, and changes in one area can ripple through, affecting other aspects you may not have even considered. The pursuit of a single, shining metric can cast a long shadow over other equally important aspects of your operation or product.

The Blind Spots of Focused Improvement

- The Single-Metric Obsession: You become fixated on one quantifiable metric, letting it blind you to other qualitative or less easily measured aspects of performance, such as user satisfaction, robustness, or security.

- The Neglect of the Human Element: Optimization often focuses on technical efficiency. This can lead to the neglect of the human element – the user’s experience, the developer’s sanity, or the support team’s workload.

- The Unaccounted-For External Factors: You might optimize for ideal conditions, failing to account for fluctuating network speeds, diverse hardware, or varying user skill levels. This can lead to a system that performs exceptionally well in controlled environments but falters in the real world.
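One common remedy for single-metric obsession is to pair the metric you are optimizing with guardrail metrics that must not regress beyond a set tolerance. The sketch below encodes that rule; the metric names, values, and tolerances are invented for illustration, and it assumes the primary metric is higher-is-better.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Guardrail:
    name: str
    max_regression: float          # largest tolerated relative drop, e.g. 0.02 = 2%
    higher_is_better: bool = True


def accept_change(baseline: Dict[str, float], candidate: Dict[str, float],
                  primary: str, guardrails: List[Guardrail]) -> Tuple[bool, List[str]]:
    """Accept only if the primary metric improves and no guardrail regresses too far."""
    failures: List[str] = []
    if candidate[primary] <= baseline[primary]:
        failures.append(f"{primary} did not improve")
    for g in guardrails:
        change = (candidate[g.name] - baseline[g.name]) / baseline[g.name]
        if not g.higher_is_better:
            change = -change       # e.g. higher latency counts as a regression
        if change < -g.max_regression:
            failures.append(f"{g.name} regressed by {abs(change):.1%}")
    return (not failures, failures)


# Invented numbers: caching made pages faster, but people engaged less.
baseline = {"load_speed_score": 70.0, "engagement_rate": 0.40, "p95_interaction_ms": 120.0}
candidate = {"load_speed_score": 90.0, "engagement_rate": 0.36, "p95_interaction_ms": 125.0}
guardrails = [
    Guardrail("engagement_rate", max_regression=0.02),
    Guardrail("p95_interaction_ms", max_regression=0.10, higher_is_better=False),
]

ok, reasons = accept_change(baseline, candidate, "load_speed_score", guardrails)
print(ok, reasons)
```

With these invented figures the faster page is rejected because engagement falls by about 10%, mirroring the caching scenario above: the headline metric improved, but a guardrail caught the cost.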


Reclaiming Balance: The Art of “Good Enough”

The antidote to over-optimization is not the abandonment of improvement, but the embrace of balance. It’s about understanding that true success lies not in pushing for unattainable perfection, but in achieving a state of robust, adaptable, and maintainable efficiency. This is the art of finding the “good enough” – that sweet spot where your system or process performs exceptionally well, meets all its objectives, and does so without succumbing to crippling complexity or fragility.

Think of a well-crafted tool. It is sharp, strong, and fits comfortably in your hand. It performs its intended task with precision. However, it does not need to be made of an impossibly rare alloy or have microscopic tolerances that require specialized calibration every hour. It is durable, reliable, and serves its purpose effectively. This is a tool that has been optimized, but not over-optimized.

To achieve this balance, you must cultivate a pragmatic mindset. Regularly step back from the granular details and assess the overall health and trajectory of your project. Ask yourself if the marginal gains you’re chasing are truly worth the investment of resources and the introduction of potential complexity. Prioritize changes that offer significant, demonstrable improvements rather than chasing incremental optimizations that yield diminishing returns.

The Pragmatic Approach to Optimization

- Embrace Iterative Improvement: Instead of aiming for a single, perfect state, focus on continuous, iterative improvement. Release functional solutions that are good enough, and then gather feedback and data to make further, targeted enhancements.

- Prioritize Simplicity and Readability: When faced with multiple optimization options, favor the one that maintains the greatest degree of simplicity and readability. A slightly less performant but easily understood solution is often more valuable in the long run.

- Know When to Stop: This is perhaps the most crucial skill. Develop a clear set of criteria for when an optimization process is complete. This might involve hitting a predefined performance target, exhausting all significant avenues for improvement, or simply reaching a point where further effort is no longer cost-effective.

- Value Maintainability Over Absolute Performance: Unless you are operating in an extremely niche, performance-critical domain, prioritize maintainability and adaptability over achieving the absolute theoretical maximum performance. A system that can be easily updated and adapted will ultimately provide more long-term value.

- Listen to Your Users and Stakeholders: Their feedback often provides invaluable insights into what truly matters. Sometimes, the most “optimized” solution from a purely technical standpoint is not the one that best serves the needs of your users or the objectives of your project.
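The "Know When to Stop" criteria can be written down as an explicit rule, so the decision is made before you become attached to the next tweak. This sketch checks a target, a round budget, and whether the last round paid for itself; the helper name `stop_reason` and all numbers are hypothetical, and value and cost must be expressed in the same units.

```python
from typing import List, Optional


def stop_reason(history: List[float], target: float, cost_per_round: float,
                value_per_unit: float, budget_rounds: int) -> Optional[str]:
    """Return why optimization should stop, or None to keep going.

    history: metric value after each round (higher is better), starting with the baseline.
    """
    if history[-1] >= target:
        return "target reached"
    rounds_done = len(history) - 1
    if rounds_done >= budget_rounds:
        return "budget exhausted"
    if rounds_done >= 1:
        last_gain = history[-1] - history[-2]
        if last_gain * value_per_unit < cost_per_round:
            return "last round cost more than it returned"
    return None


# Hypothetical throughput after each round: gains shrink from 40 to 20 to 8 to 3.
history = [100.0, 140.0, 160.0, 168.0, 171.0]
for i in range(1, len(history) + 1):
    reason = stop_reason(history[:i], target=200, cost_per_round=5,
                         value_per_unit=1.0, budget_rounds=10)
    print(history[i - 1], reason)
```

Looking only at the last round's gain is a deliberate simplification; for a noisy metric, averaging the gain over several rounds before stopping would be more robust.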

By understanding these pitfalls and actively working to maintain balance, you can steer clear of the treacherous waters of over-optimization and navigate your projects toward sustainable success rather than a spectacular and costly crash.

FAQs

What is over-optimization in SEO?

Over-optimization in SEO refers to the excessive use of optimization techniques, such as keyword stuffing, unnatural link building, or repetitive content, which can lead to penalties from search engines and negatively impact a website’s ranking.

How can I identify if my website is over-optimized?

Signs of over-optimization include unnatural keyword density, excessive internal or external links, duplicate content, and sudden drops in search engine rankings. Using SEO audit tools can help detect these issues.

What are common over-optimization traps to avoid?

Common traps include keyword stuffing, overuse of exact match anchor text, creating low-quality backlinks, duplicating content excessively, and ignoring user experience in favor of search engine algorithms.

How can I avoid over-optimization while optimizing my website?

Focus on creating high-quality, relevant content for users, use keywords naturally, diversify anchor text, build genuine backlinks, and regularly monitor your SEO performance to ensure compliance with search engine guidelines.

Why is avoiding over-optimization important for long-term SEO success?

Avoiding over-optimization helps maintain a website’s credibility with search engines, prevents penalties, ensures sustainable traffic growth, and provides a better user experience, all of which contribute to long-term SEO success.